On July 15th, Ujjwala Kashkari, Director of Sales for OhmniLabs, and Swathi Young, founder of DC Emerging Technologies, co-organized the launch event for Women in AI, a global non-profit working towards gender-inclusive AI. DC Emerging Technologies is a premier event organizer in the Washington DC Metro area that connects enthusiasts and experts in emerging technologies. The group meets to share the latest trends and best practices for enterprises wanting to utilize Internet of Things (IoT), 3D Printing, Autonomous Vehicles and Aircraft, Robotics, AI, Machine Learning and other disruptive technologies.

The launch event, “Explainable AI: A peek into the brains behind AI,” brought together AI industry experts to discuss the need for AI systems to be transparent and how regulated industries can use explainable AI to reduce the risk of automated decision-making. Below is a recap of the event written by Swathi.

What the heck is Explainable AI (XAI) and why do we need it?

I was super excited to host our first Women in AI Talk, aka #WaiTalk, in Washington, D.C. last week.

A little bit about Women in AI. Women in AI is a non-profit whose mission is to increase the representation of women in AI, working towards gender-inclusive AI that benefits global society. It currently has members from 90+ countries ranging from Bahrain to Algeria. Its Slack channel is the ultimate repository of all resources in the world of AI.

Our panel discussion was aptly titled, Explainable AI: A peek into the brains behind AI.

AI today helps us solve complex problems, but with one challenge: an AI solution is usually a “black box” that makes intelligent decisions without showing how it reached them. These decisions can sometimes come at the expense of human health and safety. AI systems need to be transparent about the reasoning they use in order to increase trust, clarity, and understanding of these applications.

Explainable AI provides insights into the data, variables and decision points used to make a recommendation.
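To make that concrete, here is a minimal Python sketch of what "insight into the variables and decision points" can look like for a loan decision. It was not shown at the event; the feature names, weights, bias, and threshold are all made up for illustration, and a real model would be far more complex. The idea is simply that the system reports how much each input pushed the score up or down, alongside the decision itself.

```python
from dataclasses import dataclass

# Hypothetical weights for an illustrative loan-scoring model.
# None of these numbers come from a real underwriting system.
WEIGHTS = {"income_k": 0.5, "debt_ratio": -40.0, "late_payments": -5.0}
BIAS = 10.0
THRESHOLD = 15.0  # hypothetical approval cutoff


@dataclass
class Explanation:
    score: float
    approved: bool
    contributions: dict  # feature name -> amount it added to the score


def explain(applicant):
    """Score an applicant and record each input's contribution to the score."""
    contributions = {name: WEIGHTS[name] * applicant[name] for name in WEIGHTS}
    score = BIAS + sum(contributions.values())
    return Explanation(score, score >= THRESHOLD, contributions)


def top_factors(explanation, n=2):
    """Return the n inputs with the largest absolute effect on the score."""
    ranked = sorted(
        explanation.contributions.items(),
        key=lambda item: abs(item[1]),
        reverse=True,
    )
    return ranked[:n]


# A made-up applicant: income in thousands, debt-to-income ratio, late payments.
applicant = {"income_k": 60, "debt_ratio": 0.25, "late_payments": 1}
result = explain(applicant)

print("Approved:", result.approved, "| score:", result.score)
for name, value in top_factors(result):
    print(f"  {name}: {value:+.1f}")
```

With an explanation like this, a loan officer or regulator can see which inputs drove the outcome instead of only receiving a yes or no.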

In this session, we took a look at how regulated industries like financial institutions and healthcare are using explainable AI to reduce the risk of automated decision-making.

Here are five important points discussed during this event:

- What is explainable artificial intelligence, and is there a need for it?

Machine learning is the most common use of AI, and most businesses believe that machine learning models are opaque and non-intuitive, and that they provide no information about how they reach their decisions and predictions. This challenge has been solved to a large extent by what is termed “explainable AI,” which explains the input variables and the decision trees the models use to produce their output, as well as the structure of the model itself. However, how much explanation is needed depends on the use case at hand. Do we need to explain the model's behavior in detail when it predicts that a machine will break down? Maybe not. But in most regulated industries, as well as in use cases that involve human bias, legal counsel, or ethics, it is very important to focus on explainable AI, lest we end up with many applications that produce outcomes for the wrong reasons.

- What are some of the use cases of explainable AI?

Most of the discussion was around healthcare, which is understandable given the concerns about HIPAA and the privacy of patient information. The specific use case discussed was precision medicine based on a patient's genetics, past medical history, and family medical history. The other use cases we discussed were facial recognition technologies and loan underwriting in the financial services sector.

- What are some of the tools and techniques that are used?

The choice for implementing machine learning models is twofold: either out-of-the-box solutions like IBM Watson or pretrained TensorFlow models, or custom models built with open-source tools.

Explainability is easier to achieve when you build models with open-source tools than when you use off-the-shelf solutions like Watson. The only caveat is that it depends on the business use case. If you are trying to use image recognition software, it is hard to beat the pretrained models available for TensorFlow, since they have been trained on millions of images, more than you could realistically collect yourself.

Most often, you will be dealing with a custom solution and can use open-source tools like R or PyTorch.

- DARPA (Defense Advanced Research Projects Agency) recently published on the need for explainable AI; where do you see this headed in the world of federal agencies?

Most of the use cases in federal agencies require explainable AI, especially in the Department of Defense (DoD), the National Institutes of Health (NIH), and the Department of Homeland Security (DHS). Hence DARPA's push for explainable AI, which aims to answer questions such as: How was a decision made? Why did the model succeed or fail? How do we course correct? Answering these will ensure that users can understand, trust, and manage machine learning algorithms.

- What are some challenges while trying to achieve explainable AI?

The challenges of explainable AI are generally the same ones that affect any machine learning or AI project. Often, the business use case is not identified appropriately. The dataset used for machine learning depends on the use case, so explainability is tied to it as well. In addition, most AI/ML models are used as recommendation engines, so the final output is a combination of human and machine intelligence. In certain cases, understanding the mental model behind an individual's decision is itself a challenge.

Conclusion:

This first #WaiTalk event was well attended (we had over fifty participants) and generated a lot of interesting discussion points.

Explainable AI is here to stay, and the sooner we start incorporating it into the models we build, the sooner organizations will be able to trust AI systems.

Here are some photos from the event.

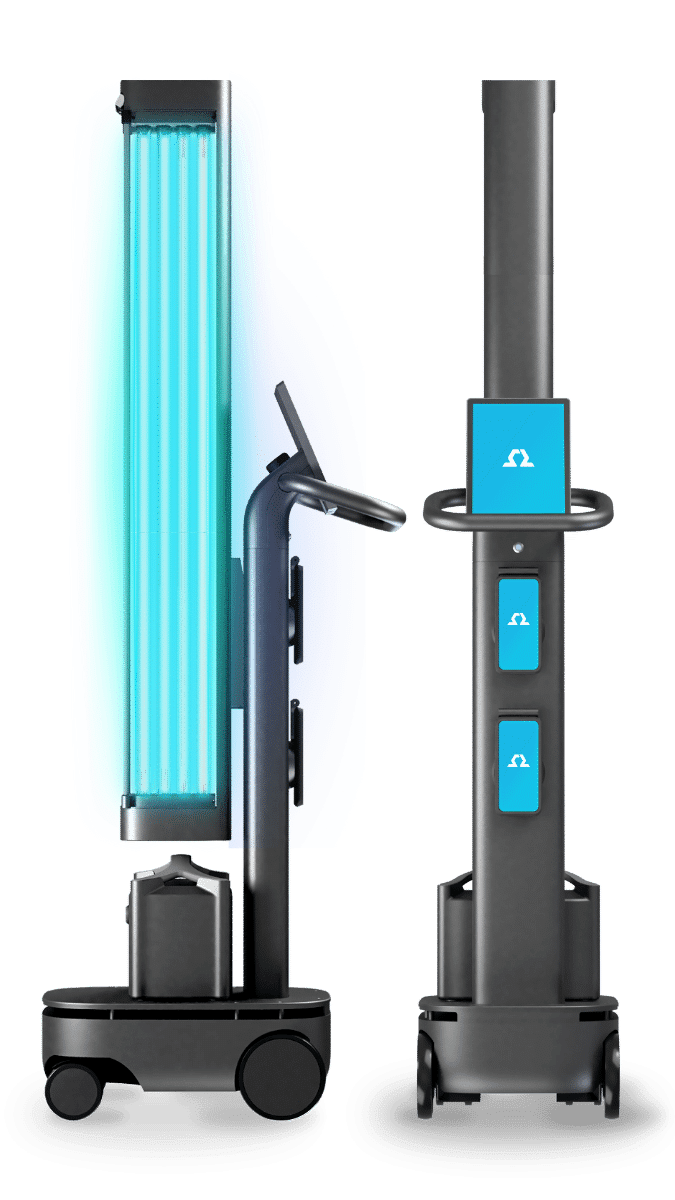

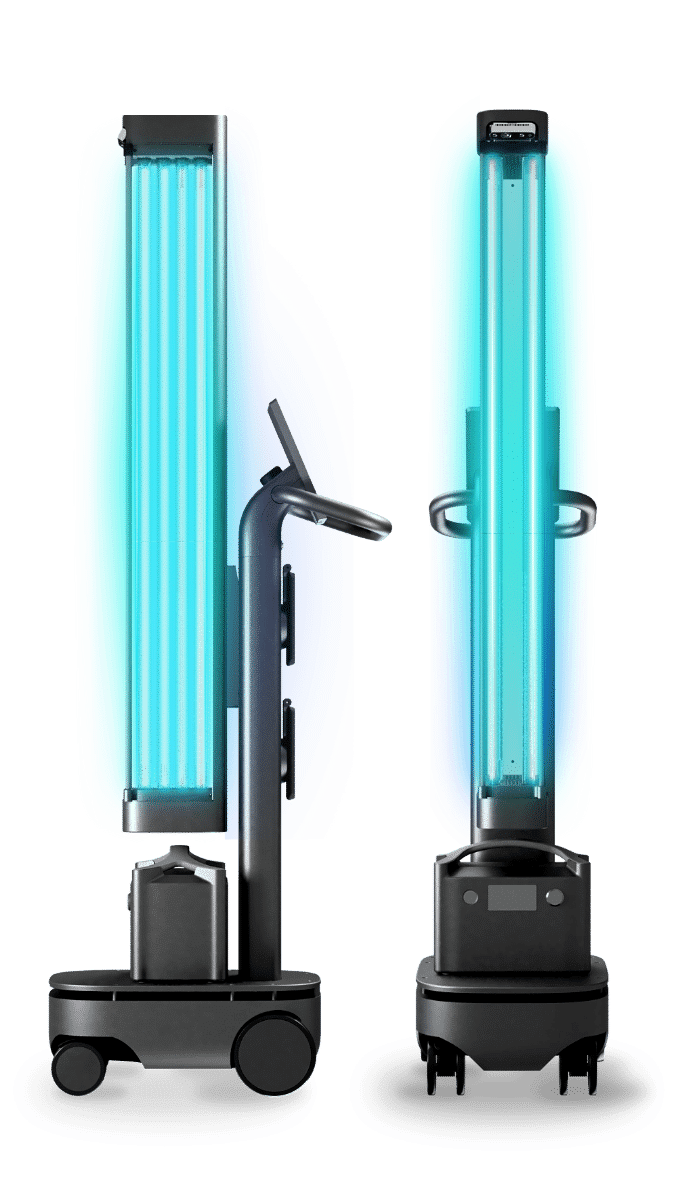

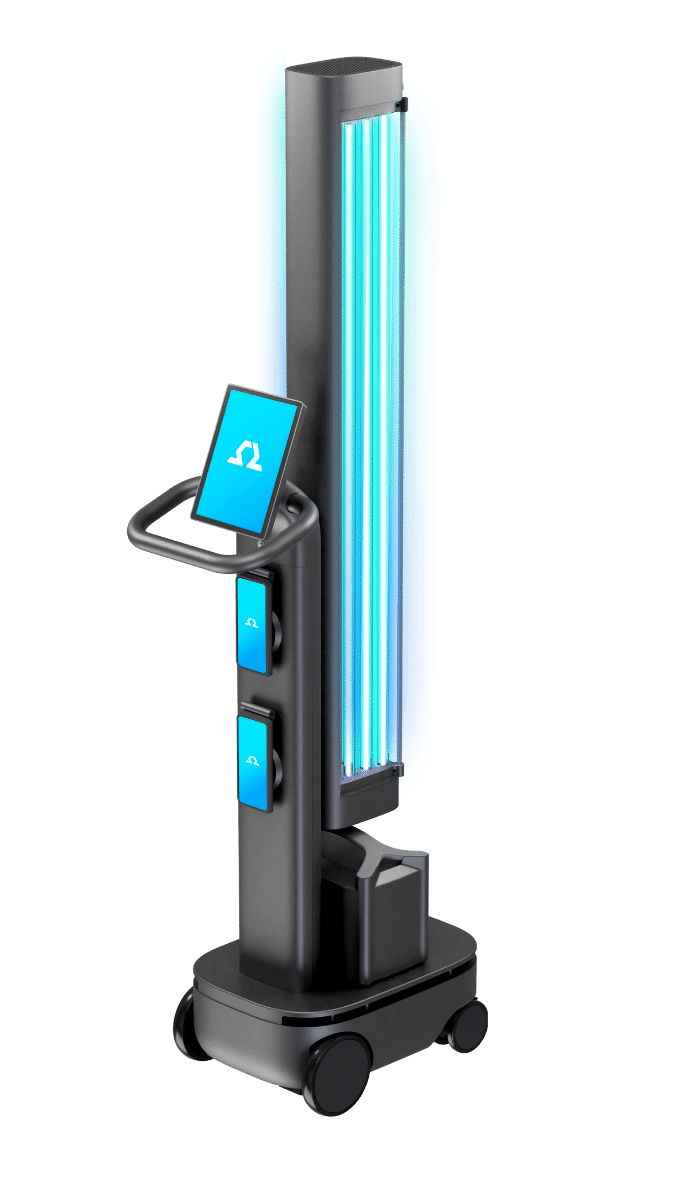
